A few weeks ago I was at an ISACA/ISC2 event where Chris Ulliott spoke about usable security. He argued that we (technology creators in general) ask far too much of the general public when we expect them to understand and use technology securely. I agree – asking every internet user to spot a sophisticated phishing email by inspecting return addresses, checking link URLs and possibly even reading email headers for dodgy IP addresses is just over the top.

Chris mentioned something called Nudge Theory and how we should use it more when designing security features. From Wikipedia: “Nudge theory is a concept in behavioural science, political theory and economics which argues that positive reinforcement and indirect suggestions to try to achieve non-forced compliance can influence the motives, incentives and decision making of groups and individuals, at least as effectively – if not more effectively – than direct instruction, legislation, or enforcement.”

The basic idea is to ‘nudge’ the person into doing the right thing. A commonly given example is the men’s urinals at Schiphol airport: a little fly is drawn in the centre of each urinal, which gives users something to aim at and thereby reduces splashing. Here are some examples of good nudges used in security:

- Password strength meters – these positively reinforce good password behaviour by going amber and then green as the password gets stronger

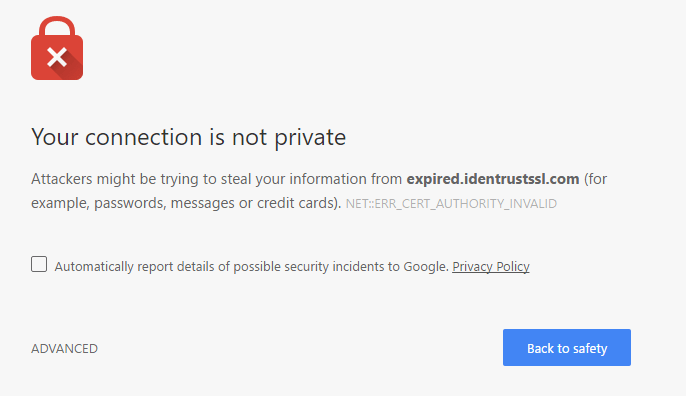

- Making “do not proceed” the default option when a security issue arises, such as Google’s “go back to safety” page when the browser encounters a digital certificate error:

I’d like to see some more of this!
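As a rough illustration of the first nudge, here is a minimal Python sketch of a traffic-light password meter. The function name `strength_colour` and the scoring rule (length plus character variety) are my own assumptions for illustration only. Real meters use better estimates of how guessable a password is; the point here is just the colour feedback.

```python
import string

def strength_colour(password: str) -> str:
    """Return 'red', 'amber' or 'green' from a crude, hypothetical score."""
    classes = [
        string.ascii_lowercase,
        string.ascii_uppercase,
        string.digits,
        string.punctuation,
    ]
    # Count how many character classes appear in the password.
    variety = sum(any(ch in cls for ch in password) for cls in classes)

    score = 0
    if len(password) >= 8:
        score += 1
    if len(password) >= 12:
        score += 1
    if variety >= 3:
        score += 1

    # The nudge: the colour improves as the user keeps typing.
    if score >= 3:
        return "green"
    if score == 2:
        return "amber"
    return "red"
```

Calling this on every keystroke and colouring the bar accordingly gives the user immediate positive feedback, without lecturing them about password rules.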

We get a partial version of the second example with emails in our spam folders: my email provider, for example, blocks images and sometimes malicious attachments. But how about blocking viewing of the email completely, as with digital certificate errors? If the email provider has put a message into spam because of a DMARC failure, it should not be viewable unless you know what you’re doing and hit ‘advanced options’.
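To make the idea concrete, here is a minimal Python sketch of a mail viewer that hides a message by default when DMARC has failed. It assumes the receiving server has recorded the result in an `Authentication-Results` header and that this header can be trusted. The function names `dmarc_failed` and `render`, the `advanced` flag and the warning text are all hypothetical, not any provider's actual behaviour.

```python
import re
from email import message_from_string

def dmarc_failed(raw_email: str) -> bool:
    """True if any Authentication-Results header reports dmarc=fail."""
    msg = message_from_string(raw_email)
    for value in msg.get_all("Authentication-Results", []):
        if re.search(r"\bdmarc\s*=\s*fail\b", value, re.IGNORECASE):
            return True
    return False

def render(raw_email: str, advanced: bool = False) -> str:
    """Show the body only if DMARC passed or the user chose 'advanced'."""
    if dmarc_failed(raw_email) and not advanced:
        # Safe default: the user has to opt in to see the content.
        return "This message failed sender checks. [Back to safety] [Advanced options]"
    # Assumes a simple, non-multipart message for brevity.
    return message_from_string(raw_email).get_payload()
```

The design choice mirrors the certificate error page: the safe path takes one click, while the risky path takes a deliberate extra step.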

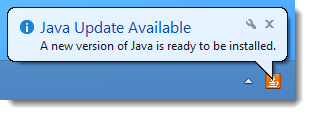

Automation is also helping – I love the fact that Google Chrome and Spotify just update themselves automatically on loading. In fact, I’m so used to automated updates that software that doesn’t update automatically is starting to get on my nerves. It is a pain, and I must confess even I don’t always update immediately when I’m told an update is available, just because it’s so off-putting to my user experience. I opened the application to use it immediately, not to waste another 5 minutes downloading and installing a new version. This means users end up in a vicious cycle of being reminded about an update at the very start (when they most want to use the app) and putting it off until the next time they use the app.

Java is an example of irritating updates…!

Usability and security are often thought of as opposites, but I think that by using nudge theory we can bring them a little closer together.